As 2025 ages out, let’s assess all that our AI and Faith community has accomplished together in the past 12 months to steward AI for beneficial outcomes, seen through the lenses of our five faith traditions. Along the way, let’s also consider lessons learned in this eighth year of our work in this fastest-changing of contexts.

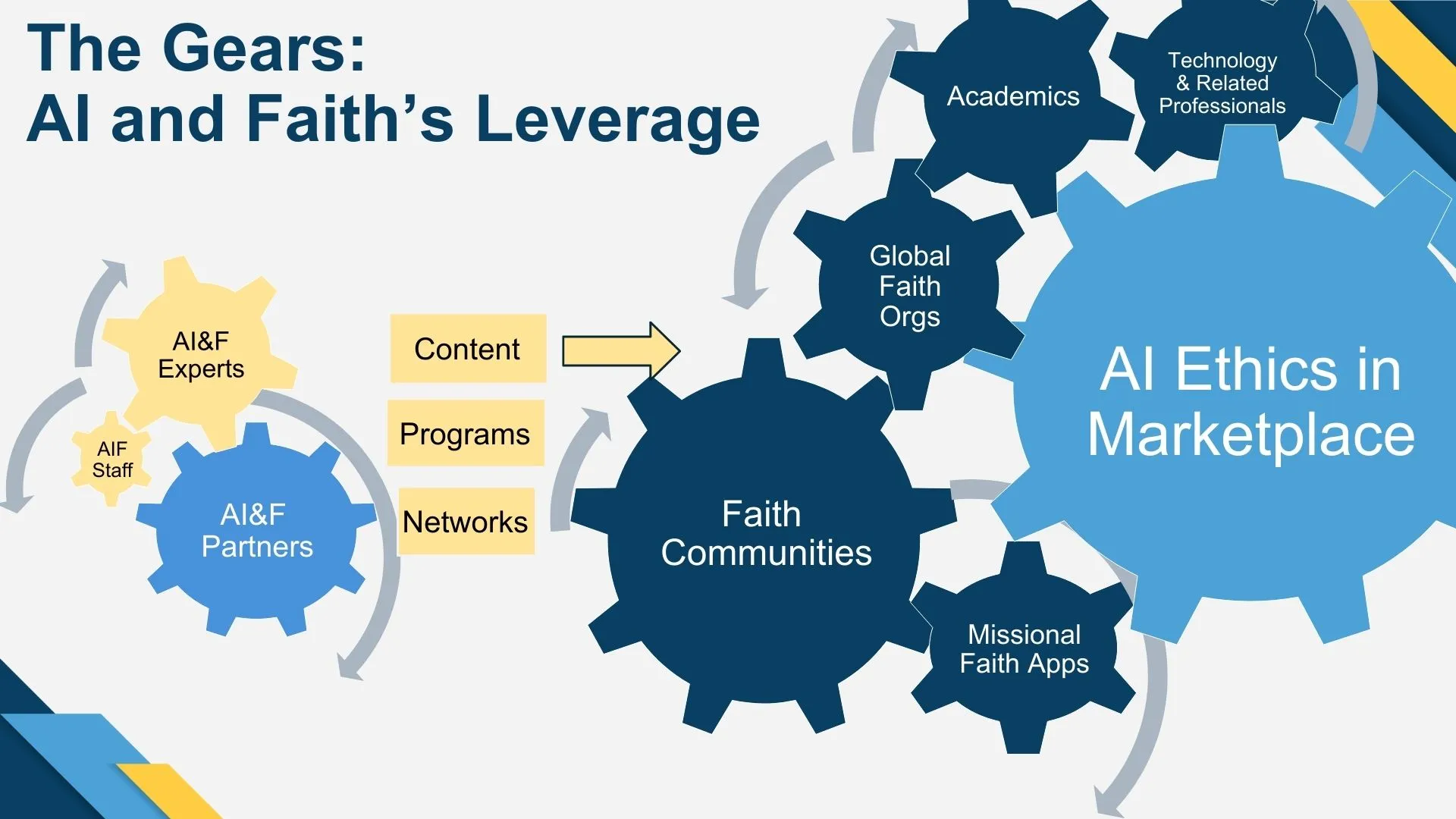

Last year at this time, I introduced this graphic depiction of how we leverage and target the expertise of our Experts, Partners, and Members into five sectors of society, each of which is essential to determining whether we end up with AI for good or destruction:

I emphasized then how this graphic illustrates our superpowers of collaborating and catalyzing across faith traditions and the entire spectrum of vital disciplines we have gathered – from sophisticated theologians and ethicists to AI professionals and a wide array of business leaders, scientists, academics, and other professions. Now let’s consider this graphic as a depiction of who we are and how we have worked this year.

Job 1: Gathering and Sharing Knowledge within our Community. The left side of this graphic shows the key elements of our community: the two larger gears for Experts and Partners, and that small but growing gear for Staff. To their right are the immediate outputs of our work: content in the form of developments and analysis of responsible, ethical, beneficial AI, shared in our own media, through conferences and programs, across the networks of our experts and partners and, ultimately, out to the organizations and people we seek to educate and influence.

From our beginning, Job 1 has meant growing this broad spectrum of expert people and partner organizations: highlighting their work across our community, building relationships of trust internally, and extending their knowledge, resources, ideas, and energy out into the broader AI ethics conversation. Here are some of the ways we continued this work in 2025.

- Our 18 Research Fellows, led by Research Director Mark Graves, presented their work in monthly meetings and prepared proposals for grant applications to fund deeper work. Example projects include Compassionate AI and Religious Voices and Responsible AI.

- With the help of our Programs Team, we held 24 internal gatherings of experts as “Town Halls”, “Salons” and informal “Brown Bag” discussions, to share information, build energy, and generally make the case for the relevance of faith-oriented values and ethics to achieving responsible AI. Our Podcast Team translated some of these and other one-on-one interviews into 25 podcast episodes, featuring AI&F experts and partners from all over the world. As a benefit to our donor Members and Professional Members, we have provided special access to some of these gatherings.

- Our Editorial Team published in our monthly digital Newsletters more than three dozen original articles, and many, many more news items and articles originally published elsewhere highlighting the work of our experts and partners. Their work included bimonthly themes like AI and Law, Warfare, Grief, Environment and Bioethics. An example of the significance and compounding reach of our experts’ work is in this single News item in the August issue. We also posted all this to our social media pages at LinkedIn and Facebook.

- We have continued to organize our experts into Affinity Groups for further sharing and cross-fertilization, moving beyond our Coordinating Catholic Connections Affinity Group to bring together our other faith traditions this year. Such groups help us identify and advance faith-specific projects and opportunities for impact that may be especially relevant geographically, socially/politically, or for specific faith practices and beliefs.

- We have also launched a “Story/Media” subject matter affinity group to focus on the power of narrative across a wide array of media, building on the considerable number of appearances by our experts in print, digital and online outlets this year like the Christian Science Monitor, Politico, Christianity Today, and ABC News. Other subject matter groups are in the works as well.

- In addition to the 25 new experts recruited by our Network Team across four countries, we added six new Partner Organizations, including our first international partners in the UK and Switzerland. AI&F and these partners mutually benefit from complementary areas of expertise, shared financial and other resources, and diverse cultural and multidisciplinary perspectives.

That small “Staff” gear between the Expert and Partner gears doubled in size this year. Like the smallest gear on a three-speed bike, this gear creates impact far beyond its size. Thanks to a generous staffing grant of $360,000 from the Murdock Trust in April, paid over three years on a declining balance basis, we were able to hire our first full-time Executive Director, Greg Cootsona. In our upcoming January Town Hall and Newsletter, Greg will share his fresh perspective on our strategy, methods and impact, based on his own considerable nonprofit experience and connections and three months of interactions with our Board, Advisors, Team Leads, other staff and key donors.

Job 2: Creating Impact. Important as such internal work is, we continue to grow our community and strengthen its knowledge base and connections for a broader purpose; as our tagline puts it, “to bring the world’s oldest wisdom to the world’s newest opportunity and challenges.” The five sector gears to the center/right of our leverage machine are the primary parts of society, business, and culture we seek to influence, toward the ultimate end of wisely stewarding the creation and deployment of AI tools for human flourishing. Our goal is to have our community powerfully turn these five “sector” gears and ultimately the giant AI Ethics Conversation beyond them to achieve responsible AI as measured through faith values and ethics. The best way to understand and track our work on a regular basis is to review the Strategic Projects on our website, where some of our key work and methodologies are highlighted and updated.

Within these five sectors, we work at three levels of engagement: 1) global leaders of faith, business, society and culture; 2) tech professionals working on AI products; and 3) grassroots faith leaders and their followers. Each level requires different leveraging methodologies, for example:

- using our experts’ knowledge, reputations and connections to network effectively with highly resourced and influential sector leaders;

- formulating measurable responsible AI standards for tech creators; and

- developing curricula for faith community leaders.

A key lesson we’re learning is the importance of understanding the different speeds at which these levels typically work, i.e., tech professionals move fast, global leaders more slowly and iteratively but with urgency to deal with AI’s dynamic opportunities and threats, while faith communities and their leaders usually move at the slower speed of inclusion. Accordingly, we’ve been working to set our “leveraging machine” at the right speed for the appropriate methodology. To extend the bike analogy, it’s like we’ve moved from a fixie to a three-speed, with the hope that with increasing staff and experience we can get to a ten-speed as soon as possible and make the most of the terrain we’re occupying. It’s complicated, but we and our partners are learning quickly when to shift gears together (and how not to grind them!).

So how have we especially leveraged our resources this year at appropriate speeds for maximum impact? Among our many activities, here are several examples I’m particularly excited about.

- Receipt of a shared grant totalling almost $500,000 with our partner the Institute for Security + Technology to engage faith leaders in mosques and churches on the threats and opportunities of AI and how their tradition’s ethical guidance can be applied to the development of safe and beneficial AI; equip leaders with curriculum and resources to teach their communities; and educate congregations on how AI impacts society, ethics, and daily life.

- Among tech professionals and faith leaders, our Trust and Accountability Project has been advancing the critical need for measurable, certifiable standards to bring responsibility to AI applications used in faith traditions. We seek to set an example for the giant AI ethics conversation by harnessing the values of faith traditions, and helping faith-oriented tech professionals integrate them into the development of tools for evangelization, teaching and preaching, spiritual formation, faith community life, and other spiritual disciplines. Here we’re finding the clash of speed gears and the need for a quality “gear shifter” is every bit as much a challenge as in the “move fast and break things” quality of secular AI development.

- Another way we’re addressing that potential gear clash is our continuing contribution to tech creator conferences. We provided speakers and a workshop on responsible AI in Christian ministry applications at the Missional AI Conference in Dallas in April, the largest gathering of Christian app creators in the world, and convened a follow-on design sprint in Dallas for 7 key potential sponsors of a standards-setting consortium in October.

- We also provided speakers and detailed workshops at major gatherings of faith leaders like the World Evangelical Alliance’s 2025 General Assembly in Seoul, where we brought 13 faculty from six countries to a 7-hour workshop modeling four key AI ethics subjects. Other significant conferences where our speakers stood out included the Lilly-funded Notre Dame Summit on AI, Faith, and Human Flourishing in September; Hamad Bin Khalifa University’s AI Ethics conference on Convergence of Technology and Diverse Moral Traditions in Qatar; the annual meeting of the American Academy of Religion in Boston; and conferences and programs in London, Salt Lake City, Washington, DC and elsewhere.

- Members of our Catholic Affinity Group span a dozen Catholic AI institutes and organizations. Fr Paolo Benanti, a top AI advisor to the late Pope Francis and the architect of the Rome Call for AI Ethics, joined us as an Advisor in the fall of 2024. Our Catholic experts have continued to influence the Vatican’s reach through foundational writing, Vatican conferences and discussion groups across the Catholic world.

- Longstanding efforts of two of our Advisors at Microsoft, Glen Weyl and Ben Olsen, led to the formation of Microsoft’s new tech industry-leading Technology for Religious Empowerment (T4RE) Initiative, which sets a new standard for how technology can strengthen understanding, dignity, and collaboration among global faith communities. The initiative produced a summer-long research survey of faith leader attitudes and uses; a panel on building dialogue between major technology companies and faith leaders across faith traditions for the National Faith ERG Conference in Washington, DC; and the Faith, Family and Technology Network, a highly agile, informal coalition of sophisticated think tank representatives, academics, researchers, funders, and policy experts meeting weekly to preserve childhood development and vital family and other human agency and relationships, regularly including more than a dozen of our experts.

- In our role as the only overtly faith-oriented partner among the Partnership on AI’s 130 global partners, we’ve been invited to name a representative to a new PAI Steering Committee for AI and Human Connection. Our new Advisor Neylan McBaine is taking on that role for us, joining the half dozen or so other AI&F experts who have participated in varying roles with PAI since its commencement.

Finally, this report would not be complete without thanking all the Experts, Partner representatives, new AI&F Members, Professional Members, and other donors who have made all our accomplishments possible this year. The terrific individuals I’ve named above are only a fraction of the key leaders and project drivers who turn our “gears”. I’d also like to especially call out:

- Our hard-working Board of Directors: Rabbi Harris Bor, Mark Graves (also Corporate Secretary), Lew MacMurren (also Treasurer), Brenda Ng, Thomas Osborn, and Rabbi Daniel Weiner, who have guided us faithfully and wisely through all of the efforts outlined here;

- The four members of our Strategy and Finance Team who advised our board this year: Samuel Chiang of the WEA; Glen Weyl of Microsoft and the Plurality Institute; Quintin McGrath, retired from Deloitte Global; and Alan Marty, recently retired as a founding partner of Next Venture Partners in Silicon Valley;

- The Team Leads and several dozen members of our Operating Teams working with our outstanding program coordinator Penny Yuen and with Mark Graves, who functioned as our volunteer and valiant COO beyond his compensated part-time Research Director role;

- Our foundation donors, the Murdock Trust and Future of Life Institute (looking at you, Will Jones!), and our faithful individual donors, including our annual donor Members, our new category of Professional Members, and our many year-over-year donors, a dozen of whom have given especially generously this year in four- and five-figure amounts ranging from $2,500 to $25,000.

Thank you all so much for contributing your resources and work to all that we have accomplished together in 2025!

Views and opinions expressed by authors and editors are their own and do not necessarily reflect the view of AI and Faith or any of its leadership.